Creating Frame Processor Plugins

Creating a Frame Processor Plugin for iOS

The Frame Processor Plugin API is built to be as extensible as possible, which allows you to create custom Frame Processor Plugins. In this guide we will create a custom Face Detector Plugin which can be used from JS.

iOS Frame Processor Plugins can be written in either Objective-C or Swift.

Automatic setup

Run the Vision Camera Plugin Builder CLI:

npx vision-camera-plugin-builder ios

The CLI will ask you for the path to your project's .xcodeproj file, the name of the plugin (e.g. FaceDetectorFrameProcessorPlugin), the name of the exposed method (e.g. detectFaces), and the language you want to use for plugin development (Objective-C, Objective-C++, or Swift).

For reference see the CLI's docs.

Manual setup

Objective-C

- Open your Project in Xcode

- Create an Objective-C source file. For the Face Detector Plugin, this will be called FaceDetectorFrameProcessorPlugin.m.

- Add the following code:

#import <VisionCamera/FrameProcessorPlugin.h>
#import <VisionCamera/FrameProcessorPluginRegistry.h>
#import <VisionCamera/Frame.h>

@interface FaceDetectorFrameProcessorPlugin : FrameProcessorPlugin
@end

@implementation FaceDetectorFrameProcessorPlugin

- (instancetype)initWithOptions:(NSDictionary*)options {
  self = [super init];
  return self;
}

// Called each time the plugin is invoked from a Frame Processor
- (id)callback:(Frame*)frame withArguments:(NSDictionary*)arguments {
  CMSampleBufferRef buffer = frame.buffer;
  UIImageOrientation orientation = frame.orientation;
  // code goes here
  return @[];
}

// Registers the plugin under the name "detectFaces" when the class is loaded
+ (void)load {
  [FrameProcessorPluginRegistry addFrameProcessorPlugin:@"detectFaces"
                                        withInitializer:^FrameProcessorPlugin*(NSDictionary* options) {
    return [[FaceDetectorFrameProcessorPlugin alloc] initWithOptions:options];
  }];
}

@end

The Frame Processor Plugin will be exposed to JS through the VisionCameraProxy object. In this case, it would be VisionCameraProxy.getFrameProcessorPlugin("detectFaces").

- Implement your Frame Processing. See the Example Plugin (Objective-C) for reference.
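
The JS-side lookup mentioned above, VisionCameraProxy.getFrameProcessorPlugin("detectFaces"), could be wrapped roughly as follows. This is a minimal sketch, not the library's own code: the FrameLike, FrameProcessorPluginLike and VisionCameraProxyLike interfaces and the createDetectFaces helper are hypothetical stand-ins that keep the example self-contained, and the call method on the returned plugin object is an assumption about the JS API. In a real app you would pass the VisionCameraProxy object exported by the library.

```typescript
// Hypothetical minimal shapes standing in for the types exported by
// react-native-vision-camera; they exist only to keep this sketch self-contained.
interface FrameLike {
  width: number
  height: number
}
interface FrameProcessorPluginLike {
  // Assumption: the plugin object exposes a `call` method.
  call(frame: FrameLike, args?: Record<string, unknown>): unknown
}
interface VisionCameraProxyLike {
  getFrameProcessorPlugin(name: string): FrameProcessorPluginLike | undefined
}

// Looks up the native plugin once by its registered name and returns a
// function that can be called from inside a Frame Processor.
function createDetectFaces(
  proxy: VisionCameraProxyLike
): (frame: FrameLike) => unknown[] {
  const plugin = proxy.getFrameProcessorPlugin('detectFaces')
  if (plugin == null) {
    // The name must match the one passed to addFrameProcessorPlugin on the native side.
    throw new Error('Frame Processor Plugin "detectFaces" is not registered')
  }
  return (frame: FrameLike): unknown[] => {
    'worklet'
    // The native template returns an empty array until it is implemented.
    const result = plugin.call(frame)
    return Array.isArray(result) ? result : []
  }
}
```

Looking the plugin up once, outside the per-frame function, keeps the lookup out of the hot path and surfaces a wrong plugin name early.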

Swift

- Open your Project in Xcode

- Create a Swift file, for the Face Detector Plugin this will be

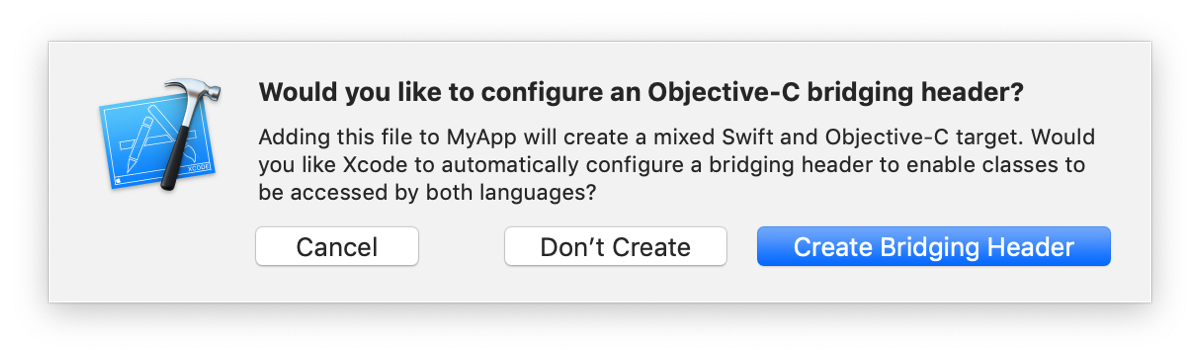

FaceDetectorFrameProcessorPlugin.swift. If Xcode asks you to create a Bridging Header, press create.

- Inside the newly created Bridging Header, add the following code:

#import <VisionCamera/FrameProcessorPlugin.h>
#import <VisionCamera/Frame.h>

- In the Swift file, add the following code:

@objc(FaceDetectorFrameProcessorPlugin)
public class FaceDetectorFrameProcessorPlugin: FrameProcessorPlugin {
  public override func callback(_ frame: Frame!, withArguments arguments: [String:Any]) -> Any {
    let buffer = frame.buffer
    let orientation = frame.orientation
    // code goes here
    return []
  }
}

- In your AppDelegate.m, add the following imports:

#import "YOUR_XCODE_PROJECT_NAME-Swift.h"
#import <VisionCamera/FrameProcessorPlugin.h>
#import <VisionCamera/FrameProcessorPluginRegistry.h>

- In your AppDelegate.m, add the following code to application:didFinishLaunchingWithOptions::

- (BOOL)application:(UIApplication *)application didFinishLaunchingWithOptions:(NSDictionary *)launchOptions
{
  ...

  [FrameProcessorPluginRegistry addFrameProcessorPlugin:@"detectFaces"
                                        withInitializer:^FrameProcessorPlugin*(NSDictionary* options) {
    return [[FaceDetectorFrameProcessorPlugin alloc] initWithOptions:options];
  }];

  return [super application:application didFinishLaunchingWithOptions:launchOptions];
}

- Implement your frame processing. See the Example Plugin (Swift) for reference.
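
Both the Objective-C and the Swift callback receive an arguments dictionary alongside the frame. The sketch below shows one way the JS side might supply it, assuming the plugin object's call method accepts an optional second argument that is forwarded to the native callback as that dictionary. The ArgumentAwarePlugin interface, the detectFacesWithOptions helper, and the option names minFaceSize and trackingEnabled are all hypothetical and chosen only for illustration.

```typescript
// Hypothetical shapes; in a real app the plugin object comes from
// VisionCameraProxy.getFrameProcessorPlugin("detectFaces").
type PluginArguments = Record<string, string | number | boolean>

interface ArgumentAwarePlugin {
  // Assumption: the second parameter reaches the native `arguments` dictionary.
  call(frame: object, args?: PluginArguments): unknown
}

// Hypothetical option names, not part of any real plugin.
interface DetectFacesOptions {
  minFaceSize: number
  trackingEnabled: boolean
}

function detectFacesWithOptions(
  plugin: ArgumentAwarePlugin,
  frame: object,
  options: DetectFacesOptions
): unknown {
  'worklet'
  // Pass a flat key/value record, which maps naturally onto a native dictionary.
  return plugin.call(frame, {
    minFaceSize: options.minFaceSize,
    trackingEnabled: options.trackingEnabled,
  })
}
```

On the native side, the same keys would then be read from the arguments parameter of callback.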